The UMBC Cyber Defense Lab presents

More holes than cheese:

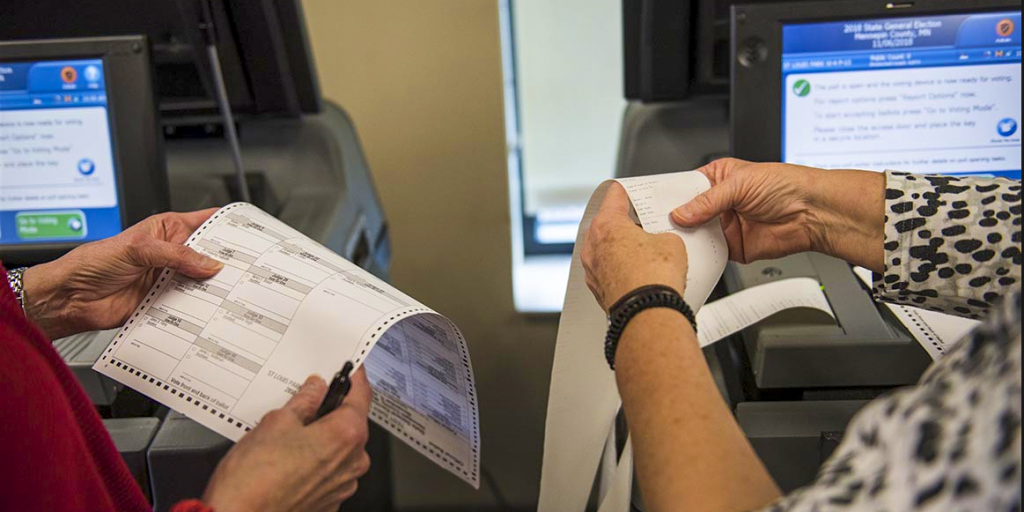

Vulnerabilities of the e-voting system used in the 2022 French presidential election

Enka Blanchard

CNRS, Laboratory of Industrial and Human Automation, Mechanics and Computer Science, Université Polytechnique Hauts-de-France, and CNRS Center for Internet and Society

12–1 pm ET Friday, 13 May 2022 via WebEx

(joint work with Antoine Gallais, Emmanuel Leblond, Djohar Sidhoum-Rahal, and Juliette Walter)

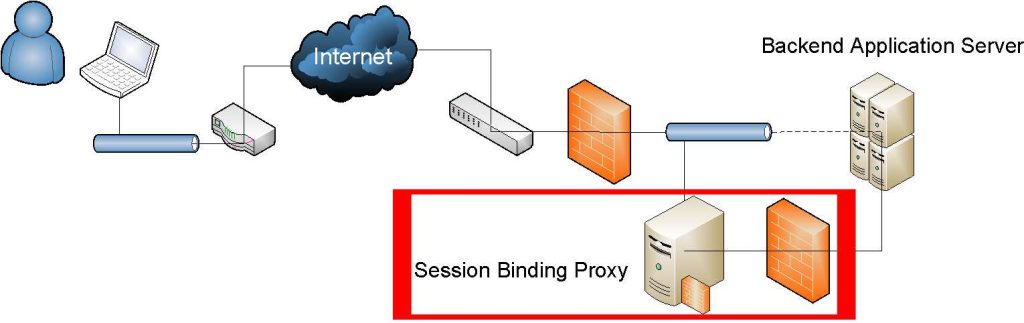

This talk will present the first security and privacy analysis of the Neovote e-voting system, which was used for three of the five primaries in the 2022 French presidential election. Based on information gathered by a whistle-blower (now a member of the team) and analyses made by our team during the last online vote in January 2022, I will show that the requirements for transparency, verifiability, and security set by French governmental organizations were not met. I will then propose multiple attacks against the system, aimed both at breaching voters’ privacy and at manipulating the tally. I will also show how inconsistencies in the verification system allow the publication of erroneous tallies and document how this occurred in practice during one of the primary elections. Finally, I will discuss the complex institutional and legal frameworks as well as the social considerations that allow systems like this one to flourish.

Dr. Enka Blanchard is a transdisciplinary permanent researcher working for the French National Centre for Scientific Research. A significant fraction of their work concerns the social and psychological aspects of security, especially when it comes to voting systems, on which they frequently collaborate with Ted Selker and Alan Sherman of UMBC. Prior to this, they were a post-doctoral fellow in the Digitrust Project of the University of Lorraine. Their research and contact information is available on their website: koliaza.com

Host: Alan T. Sherman. Support for this event was provided in part by the National Science Foundation under SFS grant DGE-1753681. The UMBC Cyber Defense Lab meets biweekly on Fridays, 12–1 pm. All meetings are open to the public. CDL meetings will resume in fall 2022.